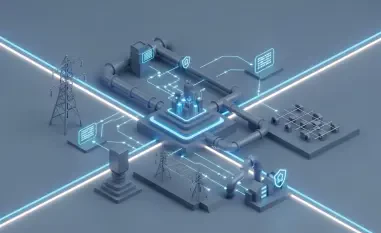

The rapid expansion of the artificial intelligence ecosystem has created an environment where speed often outpaces safety, leaving critical infrastructure vulnerable to systemic architectural flaws. Anthropic recently introduced the Model Context Protocol (MCP) as an open-source standard designed to bridge the gap between large language models and external data sources, yet security researchers have uncovered a critical vulnerability within its core design. The flaw, identified by researchers at Ox Security, affects software that accounts for approximately 150 million downloads and more than 200,000 active instances worldwide. The issue resides in the protocol’s standard input/output (stdio) interface, which carries communication between the AI application and local server processes. Because this mechanism serves as the foundation for connecting sensitive internal databases to model logic, any inherent weakness can give unauthorized actors a direct pathway past traditional security perimeters and into protected environments.

Architectural Vulnerabilities in AI Integration

The Mechanics of Arbitrary Command Execution

At the heart of the security concern is the discovery that the Model Context Protocol allows for arbitrary command execution due to a fundamental lack of input sanitization. Researchers found that the system executes specified commands regardless of whether the initial process starts successfully, which creates a dangerous loophole where malicious code can run even if it triggers an error message. This behavior effectively bypasses the defensive layers that developers expect from a modern communication protocol, enabling attackers to gain deep access to internal systems. Once an exploit is triggered, an unauthorized user could potentially extract sensitive user data, retrieve API keys stored in environment variables, or even download entire chat histories from protected sessions. In the most extreme scenarios, this vulnerability permits a total system takeover, giving external entities full control over the host machine. The absence of built-in warning flags or automated sanitization routines makes it difficult for automated tools to detect these threats before they are deployed.
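The pattern described above can be illustrated with a small, self-contained sketch. This is not the code of any MCP SDK: `launch_stdio_server` and `demonstrate` are hypothetical names, and the "malicious" command is a harmless stand-in that only writes a marker file. The sketch assumes a launcher that spawns whatever command it is given and checks the output only afterwards, which is the general shape of the behavior the researchers report.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def launch_stdio_server(command, args):
    """Hypothetical launcher: spawn the configured command, then check
    whether its output looks like the start of a JSON message."""
    proc = subprocess.Popen(
        [command, *args],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        text=True,
    )
    stdout, _ = proc.communicate(timeout=30)
    # The check happens only AFTER the process has already run.
    if not stdout.lstrip().startswith("{"):
        raise RuntimeError("process did not respond like a protocol server")
    return stdout


def demonstrate():
    """Return (launcher_reported_error, side_effect_happened)."""
    with tempfile.TemporaryDirectory() as tmp:
        marker = Path(tmp) / "marker.txt"
        # Harmless stand-in for an attacker-supplied command:
        # it writes a file and prints nothing, so it is not a valid server.
        payload = "import sys, pathlib; pathlib.Path(sys.argv[1]).write_text('ran')"
        try:
            launch_stdio_server(sys.executable, ["-c", payload, str(marker)])
            reported_error = False
        except RuntimeError:
            reported_error = True
        return reported_error, marker.exists()


if __name__ == "__main__":
    print(demonstrate())
```

In this sketch the launcher raises an error because the child process never speaks the expected protocol, yet the marker file has already been written. The error message reports the failure but does not undo the side effect, which is why validating the command before spawning matters more than validating the response after.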

Inherited Risks in the Software Development Kit

One of the most troubling aspects of this vulnerability is its presence within the Software Development Kits provided for popular programming languages including Python, Java, and Rust. Because these foundational libraries are used by thousands of organizations to build their applications, the security risk is effectively baked into the very infrastructure of the modern artificial intelligence supply chain. Developers who adopt the protocol to streamline their data integration efforts often do so under the assumption that the underlying standard is inherently secure. However, by inheriting these flawed SDKs, they unknowingly introduce significant exposure points into their production environments without any immediate indication of a compromise. This systemic nature of the flaw means that a single architectural oversight at the protocol level translates into a widespread risk across diverse industries. The widespread adoption of these languages ensures that the potential attack surface is vast, requiring a comprehensive re-evaluation of how services interact with local system resources.

The Conflict Over Protocol Security Standards

Differing Perspectives on Shared Responsibility

A significant point of contention has emerged between the creators of the protocol and the security researchers who identified the flaw, highlighting a broader debate about responsibility. Anthropic has formally characterized the observed behavior as expected and argued that the protocol remains secure by default as long as developers implement their own sanitization measures. This stance shifts the burden of security entirely onto the developers who build on the protocol, a position that many cybersecurity experts find problematic given the complexity of modern software ecosystems. Critics argue that relying on individual developers to secure every possible input point is a recipe for failure, especially when the underlying protocol itself provides the means of exploitation. These experts suggest that security should be a primary feature of the infrastructure, rather than an optional configuration left to the discretion of those building on top of it. The disagreement underscores the friction between the need for rapid innovation and the necessity of building resilient, self-defending systems.

Proactive Measures and Long Term Resilience

To address the immediate dangers posed by the Model Context Protocol, the security community has moved toward proactive mitigation rather than waiting for a central patch. Security researchers have issued over thirty responsible disclosures to affected organizations and identified more than ten high-severity Common Vulnerabilities and Exposures (CVE) entries, giving maintainers a clear roadmap for remediation. These actions allow individual project maintainers to implement custom security filters and input validation routines that shield their own instances from exploitation. The fragility of a foundational standard like this protocol serves as a wake-up call for the wider industry. Going forward, the focus is shifting toward infrastructure-level security controls that prioritize default protection over developer-led configuration. Organizations are encouraged to adopt rigorous audit cycles for all third-party integrations and to maintain a skeptical posture toward automated command execution pathways.
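A custom filter of the kind maintainers might add can be sketched as an allowlist check that runs before any process is spawned. Everything here is an assumption made for illustration: the names `ALLOWED_COMMANDS`, `BLOCKED_FLAGS`, `validate_server_command`, and `is_allowed` are hypothetical, and the specific commands and flags listed are examples rather than a recommended policy.

```python
import re

# Hypothetical policy: only these executables may be launched as servers.
ALLOWED_COMMANDS = frozenset({"npx", "uvx", "python3"})

# Flags that let an interpreter run inline code instead of a known program.
BLOCKED_FLAGS = frozenset({"-c", "-e", "--eval", "-p", "--print"})

# Characters that would be meaningful if a shell ever saw the argument.
SHELL_METACHARACTERS = re.compile(r"[;&|`$<>\n\r]")


def validate_server_command(command: str, args: list[str]) -> list[str]:
    """Return the argv to spawn, or raise ValueError if the policy rejects it."""
    if command not in ALLOWED_COMMANDS:
        raise ValueError(f"command not on allowlist: {command!r}")
    for arg in args:
        if arg in BLOCKED_FLAGS:
            raise ValueError(f"inline-code flag rejected: {arg!r}")
        if SHELL_METACHARACTERS.search(arg):
            raise ValueError(f"shell metacharacter in argument: {arg!r}")
    return [command, *args]


def is_allowed(command: str, args: list[str]) -> bool:
    """Convenience wrapper that reports the policy decision as a boolean."""
    try:
        validate_server_command(command, args)
        return True
    except ValueError:
        return False
```

A filter like this narrows the attack surface but does not eliminate it, since an allowlisted launcher can still fetch and run untrusted packages. It is best treated as one layer alongside running servers with minimal privileges and a minimal environment.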