Malik Haidar stands at the intersection of deep-tier technical analytics and high-level corporate security strategy. With years of experience protecting multinational interests from sophisticated threat actors, he has become a leading voice in how organizations must evolve to meet the rapid-fire pace of modern vulnerability discovery. Today, we explore the shifting dynamics of the CVE Program, the controversial role of AI-driven research, and the immense pressure placed on global disclosure infrastructures as the volume of security flaws reaches unprecedented levels.

The CVE program is currently experiencing a sharp rise in reports, with daily averages climbing from 132 to 174. How can the existing infrastructure handle a potential surge to 70,000 annual disclosures, and what specific technical or staffing adjustments are necessary to maintain the integrity of these records?

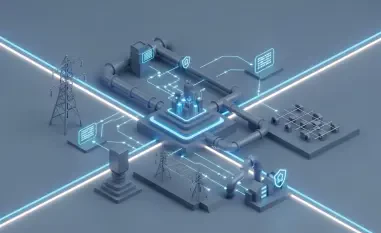

The jump from 132 to 174 daily reports is not just a statistical uptick; it represents a crushing weight on the analysts who must verify each entry to maintain the program’s 327,000 unique records. To handle a forecast of 70,135 annual disclosures, we have to move beyond manual triage and embrace the same automation that is currently driving the surge. While funding for the program is secure, the technical bottleneck remains the coordination of disclosures across the 502 CVE Numbering Authorities now registered. We need to streamline the data ingestion pipeline so that human experts touch only the most complex, high-impact flaws while automated systems handle the routine administrative metadata. It feels like trying to hold back a flood with a garden hose, but by expanding the CVE Researcher and Consumer Working Groups, we can distribute the burden of maintaining integrity across a wider, more resilient network.
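
For readers who want to see how the quoted figures relate to one another, the short sketch below works through the arithmetic. It uses only numbers stated in this interview; the 365-day year and the flat daily rate are simplifying assumptions, not figures from the CVE Program.

```python
# Figures quoted in the interview.
DAILY_BEFORE = 132         # earlier daily average of reports
DAILY_NOW = 174            # current daily average of reports
FORECAST_ANNUAL = 70_135   # forecast annual disclosures
GROWTH_PCT = 45.6          # quoted year-over-year growth

# Assumption (not from the source): a 365-day year at a flat daily rate.
DAYS_PER_YEAR = 365


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Relative rise in the daily average.
daily_rise_pct = pct_change(DAILY_BEFORE, DAILY_NOW)

# What the current daily pace would total over a year.
annualized_now = DAILY_NOW * DAYS_PER_YEAR

# Daily pace needed to reach the forecast.
forecast_daily = FORECAST_ANNUAL / DAYS_PER_YEAR

# Prior-year total implied by the quoted growth rate.
implied_baseline = FORECAST_ANNUAL / (1 + GROWTH_PCT / 100)

print(f"Daily rise: {daily_rise_pct:.1f}%")
print(f"Current pace annualized: {annualized_now:,}")
print(f"Daily pace implied by forecast: {forecast_daily:.0f}")
print(f"Baseline implied by growth rate: {implied_baseline:,.0f}")
```

Under these assumptions, the current pace of 174 per day annualizes to roughly 63,500, so reaching the 70,135 forecast would require the daily average to keep climbing to about 192. The baseline implied by 45.6% growth is close to a full year at 132 per day.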

Organizations like Anthropic and OpenAI are developing models capable of autonomously discovering thousands of zero-day vulnerabilities or chaining flaws in the Linux kernel. What specific criteria should determine if these AI firms become official CVE Numbering Authorities, and how would their participation change the disclosure timeline?

The ability of models like Claude Mythos to find thousands of previously unidentified zero-days is a game-changer that necessitates bringing these AI firms into the fold as official CVE Numbering Authorities. The criteria for their participation should be rooted in their ability to provide high-fidelity documentation and their commitment to a coordinated disclosure timeline that doesn’t overwhelm software maintainers. If these firms join the ranks of the 500-plus contributors, we could see the disclosure timeline shrink from weeks to mere hours, which is both exhilarating and terrifying for those on the defense side. Imagine the sheer speed of identifying a flaw in the Linux kernel and having a CVE assigned almost instantly; it forces a rhythm of patching that most organizations aren’t yet equipped to dance to. We must make sure these companies aren’t just “finding” bugs but are actively integrated into the response and coordination branch so that their findings are handled responsibly.

New tools such as GPT-5.4-Cyber and Claude Mythos are often restricted to vetted groups like Project Glasswing or trusted defense programs. What are the practical risks of keeping these AI-driven discovery capabilities private, and how can the industry ensure “well-defended systems” actually benefit from these automated findings?

Limiting tools like GPT-5.4-Cyber to a tiny circle, such as the 40 members of Project Glasswing, creates a dangerous intelligence vacuum for the rest of the security community. While the intent is to prevent these powerful tools from falling into the wrong hands, the practical risk is that defenders of “well-defended systems” are left guessing about the vulnerabilities these models have already uncovered. We need a mechanism by which the insights these models produce can be safely abstracted and shared with broader defense programs without leaking the underlying exploit logic. There is a palpable tension between the need for secrecy and the reality that attackers will eventually develop their own versions of these tools. If we don’t find a way to democratize the benefits of these automated findings, we are essentially leaving the majority of the world’s infrastructure to fend for itself against an invisible, AI-augmented threat.

Large language models are increasingly used to find both high-value vulnerabilities and lower-quality bugs. What step-by-step validation process should be implemented to filter out AI-generated “noise,” and how can human researchers best collaborate with these tools to prioritize critical infrastructure flaws?

The “noise” generated by AI, meaning the lower-quality bugs that don’t pose a real threat, can be just as damaging as an actual exploit because it drains precious human resources. A robust validation process must start with automated sandboxing, where the AI-discovered flaw is tested for reachability and impact before a human ever sees it. Researchers should then step in to perform a “sanity check” on the logic, focusing their high-level expertise on vulnerabilities that allow for privilege escalation or remote code execution in critical systems. This collaboration works best when the AI acts as a scout, flagging potential minefields, while the human researcher acts as the specialist who decides which threats require an immediate tactical response. It comes down to sifting through haystacks of data to find the one needle that could actually bring down a server, and that intuition is something AI still struggles to replicate.
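
The two-stage process described above can be sketched roughly as follows. This is an illustrative outline only: the `Finding` fields, the impact labels, and the `triage` function are hypothetical names invented for this example, and the sandbox results are assumed to arrive already computed rather than being produced by any real tool.

```python
from dataclasses import dataclass

# Hypothetical impact labels that send a finding to human review.
HIGH_IMPACT = {"privilege_escalation", "remote_code_execution"}


@dataclass
class Finding:
    finding_id: str
    reachable: bool        # sandbox result: can the flawed code path be reached?
    impact: str            # sandbox result: impact class, or "none"
    critical_system: bool  # does the flaw affect critical infrastructure?


def triage(findings):
    """Split AI-reported findings into human review, automated handling, and noise."""
    human_queue, auto_queue, noise = [], [], []
    for f in findings:
        if not f.reachable or f.impact == "none":
            # Stage 1: the sandbox could not confirm reachability or impact.
            noise.append(f)
        elif f.impact in HIGH_IMPACT and f.critical_system:
            # Stage 2: high-impact flaws in critical systems get a human sanity check.
            human_queue.append(f)
        else:
            # Confirmed but routine findings stay in the automated pipeline.
            auto_queue.append(f)
    return human_queue, auto_queue, noise


reports = [
    Finding("F-1", reachable=True, impact="remote_code_execution", critical_system=True),
    Finding("F-2", reachable=False, impact="remote_code_execution", critical_system=True),
    Finding("F-3", reachable=True, impact="information_leak", critical_system=False),
    Finding("F-4", reachable=True, impact="none", critical_system=True),
]

human, auto, noise = triage(reports)
print("Human review:", [f.finding_id for f in human])
print("Automated:", [f.finding_id for f in auto])
print("Noise:", [f.finding_id for f in noise])
```

The ordering matters: filtering on sandbox results first means a human only ever sees findings that have already been confirmed as reachable and impactful.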

European regulators have suggested that the security capabilities of AI models should be fully disclosed before they reach the commercial market. What specific transparency metrics should AI developers provide during the testing phase, and how would this proactive disclosure protect end-users from immediate exploitation?

Transparency is the only way to prevent users from being put at risk, and AI developers should be required to disclose metrics related to “exploitability ceilings”: in essence, the most complex flaw the model can autonomously exploit. We need to know if a model can chain vulnerabilities together in a simulation environment before it is ever released to the public or even a “trusted” group. Proactive disclosure would allow organizations to harden their systems against the specific types of attacks the model is known to be proficient at, creating a preemptive shield. It is about shifting the “right of way” in cybersecurity; instead of waiting for a product to be pushed to market and then finding the holes, we demand that the holes found during testing become the roadmap for our defense. This regulatory push by ENISA ensures that the rapid 45.6% growth in reported vulnerabilities is met with an equally rapid and transparent defensive posture.

The CVE program has recently surpassed 500 contributors while expanding its international presence through new researcher working groups. How does this diversification strategy impact the speed of vulnerability patching, and what specific hurdles do international partners face when coordinating with US-based agencies like CISA?

Reaching the milestone of 502 CNAs is a massive win for diversification, as it brings in local expertise from European-based authorities and diverse practitioner groups. This expansion speeds up patching by placing the power of disclosure closer to the source of the software, but the hurdles of international coordination are still very real, especially when US-based agencies like CISA face administrative challenges like government shutdowns. These shutdowns complicate spending for outreach and can stall the decision-making processes necessary for globally synchronized patching efforts. International partners often have to navigate different legal frameworks and reporting requirements, which can feel like trying to coordinate a global orchestra where everyone is reading from a slightly different score. Despite these frictions, the internationalization of the program is our best defense against the borderless nature of AI-driven cyber threats.

What is your forecast for the CVE Program?

I predict that the CVE Program will undergo a fundamental transformation, evolving from a static record-keeping system into a dynamic, real-time intelligence hub driven by AI-to-AI communication. By the end of this year, we are likely to hit that 70,135 CVE mark, representing 45.6% growth that will finally force the full integration of major AI firms like OpenAI and Anthropic into the core of our disclosure infrastructure. We will see a shift where the “human in the loop” becomes a “human on the loop,” overseeing automated systems that identify, log, and even suggest patches for vulnerabilities within minutes of discovery. While the volume of data will be overwhelming, the precision of our defenses will increase exponentially, provided we maintain the transparency and international cooperation that have become the program’s new bedrock.