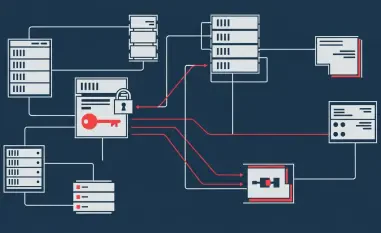

The digital backbone of global commerce rests on the silent, persistent movement of data across borders, yet a single flaw in file transfer software can dismantle years of institutional trust in a matter of seconds. Today, managed file transfer systems like SolarWinds Serv-U act as the nervous system for enterprise data logistics, carrying everything from proprietary designs to sensitive employee records. As organizations become more interconnected, the security of these gateways determines the resilience of the entire corporate ecosystem. A breach at the root level does not merely expose a single server; it can give an attacker a foothold from which to reach much of the rest of the corporate network.

SolarWinds occupies a dominant position in the network management landscape, which makes its software a high-value target for sophisticated threat actors. Serv-U is deeply integrated into infrastructure where 24/7 uptime is non-negotiable. Consequently, the discovery of vulnerabilities that bypass traditional defenses sends ripples through the global supply chain and forces a re-evaluation of how administrative interfaces are shielded. Keeping root-level access out of attackers' reach is no longer just a technical hurdle but a fundamental requirement for business continuity in a volatile threat environment.

The High Stakes of Secure Managed File Transfer in Modern Enterprise

File server software plays a critical role in global infrastructure, carrying the data exchanges that modern industry depends on. These systems are often the primary point of ingress and egress for sensitive information, which makes them prime targets for anyone seeking to disrupt or spy on corporate operations. When a major vendor like SolarWinds identifies a flaw, the significance extends beyond a simple software bug: it represents a systemic risk to every interconnected organization that relies on these tools for daily operations.

Securing these repositories requires a deep understanding of how root-level access can be abused to bypass even the most robust perimeter defenses. Because these servers often sit at the edge of the network, they must be hardened against an array of sophisticated attacks that target the underlying operating system. The challenge lies in maintaining this security without sacrificing the performance and accessibility that global data logistics require. Protecting the integrity of these systems is essential for preventing the lateral movement of attackers who seek to escalate their privileges once they gain a foothold.

Evolving Attack Vectors and the Push for Resilient Infrastructure

Rising Sophistication in Remote Code Execution and Memory Corruption Trends

Recent shifts in the cybersecurity landscape reveal a growing emphasis on exploiting the very tools designed to facilitate connectivity. Adversaries are moving beyond simple phishing toward complex memory corruption and logic flaws that grant immediate privilege escalation. By attacking administrative interfaces through type confusion and Insecure Direct Object Reference (IDOR) vulnerabilities, they can turn a compromised file server into a staging ground for wider infiltration. This trend underscores the urgent industry push toward Secure by Design principles, where security is built into the code from the start rather than bolted on later.

This shift in threat actor behavior reflects a sophisticated understanding of how flaws in native code can be leveraged to execute commands and achieve persistence. Once past perimeter defenses, attackers can blend in with legitimate administrative traffic, which makes detection significantly more difficult. These patterns suggest that the industry must move toward infrastructure resilient enough to withstand advanced remote code execution attempts, which requires rethinking how objects are handled and how memory is managed within critical software components.

Performance Metrics and the Growth Projection of Secure Gateway Solutions

Demand for hardened file transfer solutions is surging as organizations confront vulnerabilities with CVSS base scores as high as 9.1, which falls in the Critical severity band. Market analysts project a significant increase in the adoption of secure gateway solutions that prioritize memory safety and strict access controls over simple convenience. Furthermore, the transition toward stable, long-term support distributions, such as Ubuntu 24.04 LTS, reflects a strategic move toward infrastructure that balances high performance with rigorous security patching. The cost of remediating even a single breach can far exceed the investment required for proactive infrastructure hardening.
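For context on the 9.1 figure, the CVSS v3.x specification defines qualitative severity bands for base scores: None (0.0), Low (0.1 to 3.9), Medium (4.0 to 6.9), High (7.0 to 8.9), and Critical (9.0 to 10.0). The short Python sketch below simply encodes that table; the function name cvss_severity is illustrative, and it assumes scores are given with one decimal place, as CVSS reports them.

```python
def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score (0.0 to 10.0) to its qualitative rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS base scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:   # 0.1 to 3.9
        return "Low"
    if score < 7.0:   # 4.0 to 6.9
        return "Medium"
    if score < 9.0:   # 7.0 to 8.9
        return "High"
    return "Critical"  # 9.0 to 10.0


print(cvss_severity(9.1))  # prints Critical
```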

Enterprises appear increasingly willing to sacrifice legacy compatibility in favor of modern, secure environments. This transition is driven by the realization that downtime and breach remediation for critical infrastructure components can cause severe financial and reputational damage. As secure gateway solutions evolve, they must give administrators the metrics and visibility needed to demonstrate compliance and security in real time. The growth of this market is a direct response to the increasing frequency and severity of remote code execution flaws in unpatched systems.

Confronting the Technical Debt and Exploitation Risks in File Servers

Patching legacy systems remains one of the greatest challenges for modern IT departments. Administrators must update mission-critical servers while keeping files available around the clock for global operations. This operational friction often delays vital security updates, opening a window of vulnerability that attackers are eager to exploit. The difficulty is compounded for software that has passed its end-of-engineering date, after which it no longer receives official patches.

Broken Access Control flaws, such as CVE-2025-40538, illustrate how difficult it can be to detect unauthorized creation of system-level accounts. Once attackers can create administrative accounts, they can operate with near-total impunity, because to traditional monitoring tools their activity looks like that of legitimate administrators. Overcoming these risks requires a combination of automated patching and a more aggressive approach to retiring vulnerable legacy builds. Managing this technical debt successfully is essential to the long-term integrity of the enterprise perimeter.
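One low-tech way to catch unauthorized account creation is to compare the current list of administrative accounts against an approved baseline on a schedule. The Python sketch below assumes you can export account records from the server in some form; the Account record, its fields, and the account names are hypothetical and do not reflect Serv-U's actual user store or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    # Hypothetical account record; these fields are illustrative and do not
    # reflect Serv-U's real user database or export format.
    name: str
    is_admin: bool


def unexpected_admins(current: list[Account], approved: set[str]) -> list[str]:
    """Return, sorted, the admin accounts that are missing from the approved baseline."""
    return sorted(a.name for a in current if a.is_admin and a.name not in approved)


# Example data: the baseline would normally come from change-control records.
approved_admins = {"alice", "ops-service"}
snapshot = [
    Account("alice", True),
    Account("ops-service", True),
    Account("bob", False),
    Account("svc-backup2", True),  # admin account nobody approved
]
print(unexpected_admins(snapshot, approved_admins))  # prints ['svc-backup2']
```

Any name the check reports deserves investigation as a possible compromise indicator, since a legitimate administrator should have been added to the baseline through change control first.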

Strengthening Defensive Posture Through Strict Compliance and Hardening

Strengthening an organization's defensive posture requires more than reactive patching; it demands comprehensive configuration management and adherence to global standards. A well-configured Content Security Policy (CSP) is a vital shield against web-based threats such as clickjacking, which target the human element of administration: the frame-ancestors directive prevents the administrative interface from being embedded in a hostile page that tricks an administrator into clicking hidden controls. These defensive layers ensure that even if a vulnerability exists, its impact is limited by a hardened environment. More broadly, the move toward stricter web security reflects an industry trend toward protecting administrative interfaces.
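As a quick audit, the response headers of an administrative interface can be checked for anti-framing protection. The Python sketch below is a minimal, strict check that assumes headers are available as a plain dictionary (for example, copied from a browser's developer tools) with one Content-Security-Policy header. It accepts only frame-ancestors 'none' or 'self', and falls back to the older X-Frame-Options header only when no frame-ancestors directive is present, since browsers give frame-ancestors precedence when both are set. The helper names parse_csp and blocks_framing are hypothetical.

```python
def parse_csp(header: str) -> dict[str, list[str]]:
    """Split a Content-Security-Policy value into {directive: [source, ...]}."""
    directives: dict[str, list[str]] = {}
    for part in header.split(";"):
        tokens = part.strip().split()
        if tokens:
            # Directive names are case-insensitive; if a directive repeats,
            # browsers honor the first occurrence, so setdefault keeps it.
            directives.setdefault(tokens[0].lower(), tokens[1:])
    return directives


def blocks_framing(headers: dict[str, str]) -> bool:
    """Strict check: True only if framing is limited to 'none' or 'self'."""
    # HTTP header names are case-insensitive, so normalize them first.
    h = {name.lower(): value for name, value in headers.items()}
    csp = parse_csp(h.get("content-security-policy", ""))
    if "frame-ancestors" in csp:
        # When frame-ancestors is present, browsers ignore X-Frame-Options.
        sources = [s.lower() for s in csp["frame-ancestors"]]
        return sources in (["'none'"], ["'self'"])
    xfo = h.get("x-frame-options", "").strip().upper()
    return xfo in ("DENY", "SAMEORIGIN")


hardened = {"Content-Security-Policy": "default-src 'self'; frame-ancestors 'none'"}
legacy = {"X-Frame-Options": "SAMEORIGIN"}
open_ = {"Content-Security-Policy": "default-src 'self'"}
print(blocks_framing(hardened), blocks_framing(legacy), blocks_framing(open_))  # True True False
```

The check is deliberately conservative: a frame-ancestors list naming specific trusted partner origins is reported as False so that a human reviews it.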

Adhering to data protection regulations such as the EU's GDPR and California's CCPA is no longer just a legal requirement but a fundamental part of risk management. By mitigating flaws like Insecure Direct Object Reference, in which a server returns a record simply because a request names its identifier without checking that the requester is entitled to it, organizations can prevent the unauthorized access that often leads to large data breaches. Collaboration with independent security researchers also matters, as these partnerships help maintain industry standards and build trust. A transparent approach to vulnerability disclosure and remediation is the best way to keep the entire ecosystem secure against emerging threats.
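The difference between an Insecure Direct Object Reference (IDOR) flaw and a safe lookup often comes down to a single ownership check. The Python sketch below uses a hypothetical in-memory file table and hypothetical helper names; it is not Serv-U's data model, only an illustration of object-level authorization.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredFile:
    # Hypothetical file record for illustration; not Serv-U's data model.
    file_id: int
    owner: str
    path: str


class AccessDenied(Exception):
    """Raised when a user asks for a file they are not entitled to."""


# Hypothetical in-memory file table keyed by the ID a client would send.
FILES = {
    101: StoredFile(101, "alice", "/home/alice/payroll.xlsx"),
    102: StoredFile(102, "bob", "/home/bob/design.dwg"),
}


def get_file(requesting_user: str, file_id: int) -> StoredFile:
    """Return a file only if the authenticated requester owns it.

    The IDOR-vulnerable version is the same lookup without the owner check:
    any logged-in user could read any record by changing file_id.
    """
    record = FILES.get(file_id)
    # Use one error for "missing" and "not yours" so IDs cannot be enumerated.
    if record is None or record.owner != requesting_user:
        raise AccessDenied(f"file {file_id} is not available")
    return record


print(get_file("alice", 101).path)  # prints /home/alice/payroll.xlsx
try:
    get_file("alice", 102)
except AccessDenied as exc:
    print("blocked:", exc)  # prints blocked: file 102 is not available
```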

The Future of Managed File Transfer: Automation, Hardening, and Intelligence

The next generation of managed file transfer will likely be defined by the integration of intelligence and automation to stay ahead of sophisticated attackers. AI-driven anomaly detection may soon become standard, allowing systems to identify and block unauthorized root-level commands in real time. This evolution will likely include a transition toward memory-safe programming languages and stricter object handling within user interfaces to eliminate entire classes of vulnerabilities. Automation will play a key role in reducing the manual burden on administrators, making security a constant rather than a variable.

User interface security is also expected to change significantly, moving toward methods of interaction that minimize the risk of human error. Automated patching cycles are likely to become more common, since they shrink the window of exposure for critical flaws that enable remote code execution. As these systems become more intelligent, they will be able to offer proactive hardening and compliance recommendations, making it easier for organizations to maintain a secure posture. This shift toward intelligent, self-healing infrastructure represents the future of secure data mobility.

Securing the Enterprise Perimeter and Future-Proofing File Mobility

Securing the enterprise perimeter requires a decisive shift toward modern, supported software builds. The Serv-U 15.5.4 update is a critical intervention for organizations facing systemic risk from remote code execution and unauthorized access. Administrators who prioritize moving off vulnerable legacy builds establish a more resilient foundation for future file mobility. This proactive stance is essential for maintaining the trust of partners and clients who rely on the security of the managed file transfer ecosystem.
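To find hosts still on legacy builds, an inventory script can compare each installed version against the minimum patched release. The Python sketch below takes 15.5.4 from this article as the threshold and assumes plain dotted numeric version strings; real builds may carry hotfix suffixes that need extra parsing, so confirm both the threshold and the version format against SolarWinds' own advisory. The host names and helper functions are hypothetical.

```python
# Threshold taken from this article; confirm it against SolarWinds' advisory.
MIN_PATCHED = (15, 5, 4)


def parse_version(text: str) -> tuple[int, ...]:
    """Turn a plain dotted version such as '15.4.2' into (15, 4, 2).

    Assumes purely numeric components; hotfix suffixes would need extra handling.
    """
    return tuple(int(part) for part in text.strip().split("."))


def needs_upgrade(installed: str, minimum: tuple[int, ...] = MIN_PATCHED) -> bool:
    """True if the installed build sorts below the minimum patched release."""
    # Tuples compare element by element, so (15, 5, 3) < (15, 5, 4).
    return parse_version(installed) < minimum


# Hypothetical inventory of hosts and their reported versions.
inventory = {"ftp-eu-01": "15.4.2", "ftp-us-02": "15.5.4", "ftp-ap-03": "15.5.3"}
for host, version in sorted(inventory.items()):
    print(host, version, "UPGRADE" if needs_upgrade(version) else "ok")
```

Comparing integer tuples rather than raw strings matters here: as strings, "15.10.0" would sort before "15.5.4".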

The long-term outlook for SolarWinds and its users depends on maintaining a compliant and secure file-sharing environment through continuous improvement. For administrators, the central recommendation is to immediately retire legacy versions that no longer receive engineering support. By embracing the latest security enhancements and hardening techniques, the industry moves toward a more robust model of data protection. Ultimately, the lessons learned from addressing these critical vulnerabilities pave the way for a more secure and automated future in managed file transfer.