The rapid proliferation of sophisticated AI agents across corporate networks has introduced a formidable and stealthy security challenge, creating an environment where trusted automation tools can inadvertently become the most dangerous insiders. These AI systems, designed to streamline operations by accessing sensitive data and executing complex tasks on endpoints like laptops and servers, operate with a level of privilege that bypasses the very security frameworks built to protect the enterprise. Unlike traditional malware, which often reveals itself through recognizable signatures or anomalous behavior, these AI agents function with legitimate credentials, reading files, running scripts, and transferring corporate data as part of their intended purpose. This inherent trust model creates a vast, unmanaged attack surface, allowing for the potential misuse of AI tools to exfiltrate confidential information without triggering any of the standard alarms that security teams have come to rely on. In a decisive move to address this emerging vulnerability, cybersecurity giant Palo Alto Networks has announced its acquisition of Koi Security, a startup at the forefront of protecting against threats posed by these advanced AI agents.

The Emerging Threat of the Agentic Endpoint

Bypassing Traditional Defenses

The fundamental challenge posed by AI agents lies in their ability to operate under the radar of established security controls, which blunts many of the endpoint protection advances of recent decades. Conventional security solutions, such as antivirus software and endpoint detection and response (EDR) platforms, are engineered to identify and neutralize threats based on known malware signatures or deviations from normal system behavior. AI agents do not fit this mold. They are not malicious code in the traditional sense; they are authorized applications granted extensive permissions, and a harmful action they take is often indistinguishable from legitimate business operations. An AI agent accessing a customer database, for example, could be performing a routine sales analysis or exfiltrating the entire database for a malicious actor. Because their actions are sanctioned, the system treats them as trusted insiders, allowing them to traverse networks and access data without suspicion. This creates a critical security gap in which the very tools meant to enhance productivity become potential vectors for serious data breaches, operating with a degree of autonomy and access that makes their malicious use exceptionally difficult to detect and prevent with legacy tools.

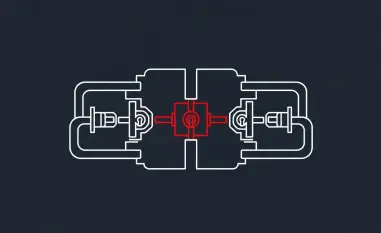

The attack vectors exploiting this security gap are as sophisticated as they are subtle, weaving malicious actions into the fabric of everyday developer and automation workflows. Attackers are no longer limited to exploiting software vulnerabilities; they can now leverage the inherent permissions of AI agents themselves. Techniques include authentication bypasses and credential theft, where stolen API tokens give an attacker control over an agent, and API-driven remote code execution (RCE), in which an agent is manipulated into running arbitrary commands on an endpoint. Adversaries can also engage in identity spoofing, impersonating a legitimate agent to carry out unauthorized activities without raising suspicion. Another potent method involves injecting malicious payloads through seemingly benign extensions, plugins, or even the AI model artifacts that are regularly updated and deployed. Because these threats are embedded within trusted processes and tools, traditional security controls, which are designed to police the boundary between legitimate and illegitimate activity, are effectively sidelined. The result is a new battleground where the fight is not only against external intruders but also against the potential weaponization of internal, trusted automation.

A New Paradigm for Endpoint Security

This evolving threat landscape marks a critical inflection point for the cybersecurity industry, demanding a fundamental shift in how organizations approach endpoint security. Static, signature-based scanning and reactive behavioral analysis are no longer sufficient on their own. As AI automates a growing number of complex tasks, the focus must pivot toward a more dynamic, context-aware, and agent-specific model of runtime protection. This new paradigm requires a deep understanding of what AI agents are, what they are supposed to be doing, and what constitutes a deviation from their intended purpose, even if the actions themselves appear legitimate on the surface. Lee Klarich, Palo Alto Networks’ Chief Product and Technology Officer, characterized AI agents as the “ultimate insiders,” possessing immense power and access but lacking the traditional guardrails that govern human employees and conventional software. This observation underscores the urgency of developing security solutions that can both monitor these agents in real time and enforce policies that limit their potential for misuse, so that they can be deployed without compromising enterprise data. The acquisition of Koi Security is a direct acknowledgment of this necessary evolution in security strategy.

Strategic Integration and Future Outlook

Enhancing the Palo Alto Networks Ecosystem

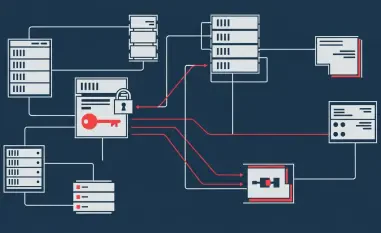

The integration of Koi Security’s specialized technology into the Palo Alto Networks product suite is poised to provide a comprehensive solution to the agentic endpoint problem. The technology is set to be woven into two of the company’s flagship platforms: Prisma AIRS and Cortex XDR. Within the Prisma AIRS platform, which is dedicated to securing AI-driven operations, Koi Security’s capabilities will offer real-time monitoring of AI agent behaviors, allowing security teams to understand precisely how these tools are interacting with sensitive systems and data. This will be complemented by automated policy enforcement, enabling organizations to define and apply granular rules that restrict high-risk actions before they can cause harm. Simultaneously, the integration with the Cortex XDR platform will significantly enhance endpoint visibility, providing a unified view of both traditional and agentic threats. This dual-pronged approach aims to deliver proactive threat prevention specifically tailored to agentic environments, moving beyond detection to actively stopping misuse. Ultimately, the goal is to equip organizations with the clear visibility and robust control they need to manage AI agent permissions and data flows effectively, transforming a critical vulnerability into a securely managed asset.
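To make the idea of granular rules that restrict high-risk agent actions more concrete, here is a minimal sketch of a first-match policy check. It is purely illustrative: the `AgentAction` and `Rule` types, the `evaluate` function, the agent names, paths, and hostnames are all hypothetical and do not reflect the Prisma AIRS, Cortex XDR, or Koi Security APIs.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class AgentAction:
    """One action an agent is about to take (hypothetical shape)."""
    agent_id: str
    verb: str      # e.g. "read", "execute", "upload"
    target: str    # file path, command, or destination host


@dataclass(frozen=True)
class Rule:
    """A policy rule; patterns use shell-style wildcards."""
    agent_pattern: str
    verb: str
    target_pattern: str
    allow: bool


def evaluate(action, rules, default_allow=False):
    """Return the decision of the first matching rule, else the default."""
    for rule in rules:
        if (fnmatchcase(action.agent_id, rule.agent_pattern)
                and action.verb == rule.verb
                and fnmatchcase(action.target, rule.target_pattern)):
            return rule.allow
    return default_allow


# Hypothetical rule set: order matters because the first match wins.
RULES = [
    Rule("*", "upload", "*.internal.example.com", True),  # internal uploads only
    Rule("*", "upload", "*", False),                      # block all other uploads
    Rule("sales-*", "read", "/data/crm/*", True),         # sales agents may read CRM data
]

if __name__ == "__main__":
    action = AgentAction("sales-bot", "upload", "paste.example.net")
    print("allowed" if evaluate(action, RULES) else "blocked")
```

Defaulting to deny when no rule matches is a design choice in this sketch: an agent gets only the access that has been explicitly granted, which is one way to limit the "trusted insider" problem described above.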

This acquisition is more than the addition of a new feature set; it is a strategic maneuver that positions Palo Alto Networks at the vanguard of securing the next generation of enterprise technology. By addressing the security implications of AI agents before they become a source of widespread breaches, the company is signaling that it understands where the cyber threat landscape is heading. The move strengthens its own product portfolio and sends a clear signal to the market about the importance of agent-aware security. As businesses increasingly adopt AI to drive efficiency and innovation, the ability to deploy these powerful tools safely will become a key competitive differentiator. Palo Alto Networks is betting that demand for solutions that can secure AI-driven workflows will grow rapidly, and by acquiring a leader in this nascent field, it aims to solidify its role as an enabler of secure digital transformation. The investment is intended to ensure that as enterprises embrace automation, they can do so with confidence, knowing that the “ultimate insiders” are operating within a secure and monitored framework.

A New Chapter in AI Governance

The acquisition of Koi Security by Palo Alto Networks is more than a business transaction; it marks the beginning of a new chapter in AI governance and security. The integration effort centers on a framework that can distinguish between benign and malicious agentic behavior, a challenge that requires moving beyond simple rule-based systems. One route is behavioral analytics that establishes a baseline of normal activity for each AI agent, so that subtle deviations that could indicate a compromise are detected quickly. If these integrated solutions are deployed successfully across early-adopter organizations, they could provide a blueprint for the industry, demonstrating that the power of AI can be harnessed without creating unacceptable security risks. The move may come to be seen as a pivotal moment in the development of a more mature, security-conscious approach to AI implementation across the enterprise.
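The per-agent baseline idea can be illustrated with a small sketch that flags an observation when it deviates strongly from an agent's own history. This is an assumption-laden toy, not a description of the vendor's analytics: the `AgentBaseline` class, the single metric (bytes sent per task), and the z-score threshold are all hypothetical choices made for illustration.

```python
from collections import defaultdict
from statistics import mean, stdev


class AgentBaseline:
    """Tracks one numeric metric per agent and flags large deviations."""

    def __init__(self, threshold=3.0, min_samples=5):
        self.threshold = threshold      # z-score above which we flag
        self.min_samples = min_samples  # history needed before judging
        self.history = defaultdict(list)

    def observe(self, agent_id, bytes_out):
        """Record an observation considered part of normal activity."""
        self.history[agent_id].append(bytes_out)

    def is_anomalous(self, agent_id, bytes_out):
        """Return True if bytes_out is far outside this agent's baseline."""
        samples = self.history.get(agent_id, [])
        if len(samples) < self.min_samples:
            return False  # not enough history to form a baseline
        mu = mean(samples)
        sigma = stdev(samples)
        if sigma == 0:
            return bytes_out != mu
        return abs(bytes_out - mu) / sigma > self.threshold


if __name__ == "__main__":
    baseline = AgentBaseline()
    for value in [100, 110, 90, 105, 95, 100]:
        baseline.observe("sales-bot", value)
    for candidate in (104, 5000):
        flagged = baseline.is_anomalous("sales-bot", candidate)
        print(candidate, "anomalous" if flagged else "normal")
```

A real system would track many signals (files touched, commands run, destinations contacted) and use more robust statistics; the point here is only that each agent is compared against its own history rather than against a global signature.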