Malik Haidar is a veteran cybersecurity strategist who has spent years defending multinational corporations from sophisticated state-sponsored actors. With a deep background in threat intelligence and behavioral analytics, he specializes in bridging the gap between technical defense and business risk management. His recent work has focused on the evolution of stealthy backdoors and the ways modern attackers exploit trusted cloud infrastructures to remain hidden within corporate environments.

This conversation explores the shifting landscape of targeted cyberattacks, specifically focusing on the UAT-10027 activity cluster. We discuss the vulnerabilities of critical sectors like healthcare and education, the technical complexities of DNS-over-HTTPS as a concealment tool, and sophisticated evasion techniques such as DLL side-loading and NTDLL unhooking. Malik provides insights into how organizations can adapt their hunting strategies when traditional automated defenses are bypassed by high-level implants.

Education and healthcare institutions often operate with interconnected networks and legacy systems. How do these sectors prioritize defense against persistent backdoors, and what specific challenges do specialized facilities, such as elderly care centers, face when protecting sensitive data from targeted campaigns?

These sectors are uniquely vulnerable because a single infection can ripple through an entire ecosystem, as we see with universities that serve as hubs for multiple interconnected institutions. When an attacker gains a foothold in such a network, they are essentially looking for the path of least resistance to escalate their access. In specialized facilities like elderly care centers, the challenge is even more acute because these environments often lack the robust, 24/7 security operations centers found in larger corporate entities. Protecting sensitive resident data requires a shift from reactive patching to proactive isolation of critical systems, especially since these facilities are now being targeted by campaigns like UAT-10027 that use sophisticated scripts to deploy multi-stage malware. Defenders must realize that these aren’t just random infections; they are calculated moves to establish a long-term presence within networks that are often under-resourced and over-connected.

Modern malware frequently utilizes DNS-over-HTTPS to hide command-and-control communications within legitimate encrypted traffic. What strategies should network administrators use to detect this behavior without breaking privacy protocols, and how does this technique complicate the effectiveness of traditional DNS sinkholes?

The use of DNS-over-HTTPS, or DoH, is a game-changer for attackers because it effectively blinds traditional network security infrastructure. By wrapping command-and-control communications in standard HTTPS traffic directed at trusted global resolvers such as Cloudflare's, the malware makes its heartbeat look nearly identical to a user browsing a reputable website. This renders traditional DNS sinkholes almost entirely useless, because there is no clear-text DNS query for the security tool to intercept or redirect. To combat this without violating privacy, administrators need to stop inspecting the content of the packets and instead look at the behavior of the connection: the frequency, timing, and size of the encrypted bursts. Implementing TLS inspection in a controlled manner, or using endpoint telemetry to identify which specific process is initiating the DoH request, is often the only way to unmask this activity.
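The behavioral approach described above can be sketched as a timing check over flow records. This is a minimal illustration rather than a production detector: it assumes endpoint telemetry already attributes each outbound connection to a process, and the resolver list, the `Flow` record, and the thresholds (`min_events`, `max_cv`) are hypothetical choices for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev

# Hypothetical starter list of public resolvers that serve DoH on port 443
# (Cloudflare, Google, Quad9). A real deployment would use a maintained feed.
KNOWN_DOH_RESOLVERS = {"1.1.1.1", "1.0.0.1", "8.8.8.8", "8.8.4.4", "9.9.9.9"}


@dataclass(frozen=True)
class Flow:
    timestamp: float  # connection start time, in seconds
    process: str      # image name attributed by endpoint telemetry (assumed field)
    dst_ip: str
    dst_port: int


def flag_beaconing(flows, min_events=6, max_cv=0.1):
    """Return (process, dst_ip) pairs whose HTTPS connections to a known DoH
    resolver recur at near-constant intervals.

    Regularity is measured as the coefficient of variation (stdev / mean) of
    the gaps between consecutive connections; values near 0 mean clockwork timing.
    """
    timestamps = defaultdict(list)
    for f in flows:
        if f.dst_port == 443 and f.dst_ip in KNOWN_DOH_RESOLVERS:
            timestamps[(f.process, f.dst_ip)].append(f.timestamp)

    suspicious = []
    for key, times in timestamps.items():
        if len(times) < min_events:
            continue  # too few samples to judge regularity
        times.sort()
        gaps = [later - earlier for earlier, later in zip(times, times[1:])]
        avg = mean(gaps)
        if avg > 0 and pstdev(gaps) / avg <= max_cv:
            suspicious.append(key)
    return sorted(suspicious)


# Example: a 60-second heartbeat versus bursty, human-driven browser lookups.
beacon = [Flow(1000.0 + 60 * i, "ScreenClippingHost.exe", "1.1.1.1", 443) for i in range(10)]
browser = [Flow(t, "chrome.exe", "1.1.1.1", 443) for t in (1000, 1003, 1090, 1500, 1520, 2400, 2410)]
print(flag_beaconing(beacon + browser))  # [('ScreenClippingHost.exe', '1.1.1.1')]
```

Keying on the process as well as the destination is the important design choice here. A browser legitimately using DoH against the same resolver is not flagged, while a system utility with a fixed heartbeat is. Payload-size consistency could be added as a second feature in the same way.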

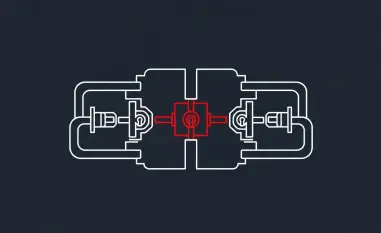

Attackers are increasingly using DLL side-loading through trusted Windows executables like Fondue.exe or ScreenClippingHost.exe to execute malicious payloads. How can security teams differentiate between legitimate system processes and hijacked ones, and what steps are necessary to harden environments against these side-loading techniques?

DLL side-loading is particularly insidious because it piggybacks on the trust we place in legitimate Windows binaries. When an administrator sees a process like “mblctr.exe” or “ScreenClippingHost.exe” running, their first instinct usually isn’t suspicion, which is exactly what the threat actors behind the Dohdoor backdoor are counting on. Differentiating between a clean process and a hijacked one requires looking for unsigned or unexpected DLLs being loaded from non-standard directories, such as a batch script dropping a file named “propsys.dll” into a temporary folder. Hardening against this involves strict application control policies and monitoring for legitimate executables running from locations they shouldn’t be, such as a system binary copied out of System32. Security teams should also focus on the parent-child relationship of processes; for example, a screen clipping tool should never be the parent process for a PowerShell script or a network connection to an external staging server.
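The three checks above (expected location of the binary, origin and signature of the loaded DLL, and parent-child relationships) can be expressed as simple rules over image-load and process-creation events. The sketch below is illustrative only: the `ImageLoad` record, the expected directories, and the "never spawns a shell" policy are assumptions to verify against your own Windows builds and telemetry.

```python
from dataclasses import dataclass
from pathlib import PureWindowsPath

# Assumed install locations, lower-cased for case-insensitive comparison.
# Verify these on your own Windows builds; add other binaries (for example
# ScreenClippingHost.exe, which lives outside System32) with their real paths.
EXPECTED_DIRS = {
    "fondue.exe": PureWindowsPath(r"c:\windows\system32"),
    "mblctr.exe": PureWindowsPath(r"c:\windows\system32"),
}
TRUSTED_DLL_DIRS = (
    PureWindowsPath(r"c:\windows\system32"),
    PureWindowsPath(r"c:\windows\syswow64"),
    PureWindowsPath(r"c:\windows\winsxs"),
)
# Hypothetical policy: these tools should never spawn a shell.
NO_SHELL_PARENTS = {"fondue.exe", "mblctr.exe", "screenclippinghost.exe"}
SHELLS = {"powershell.exe", "pwsh.exe", "cmd.exe"}


def _win(path: str) -> PureWindowsPath:
    """Normalize a Windows path to lower case so comparisons ignore case."""
    return PureWindowsPath(path.lower())


@dataclass(frozen=True)
class ImageLoad:
    process_path: str  # full path of the executable that loaded the DLL
    dll_path: str      # full path of the loaded DLL
    dll_signed: bool   # signature status reported by telemetry (assumed field)


def sideload_findings(event: ImageLoad) -> list[str]:
    """Return reasons a DLL load by a watched binary looks like side-loading."""
    proc = _win(event.process_path)
    expected = EXPECTED_DIRS.get(proc.name)
    if expected is None:
        return []  # not a binary this sketch watches
    findings = []
    if proc.parent != expected:
        findings.append("binary running outside its expected directory")
    dll = _win(event.dll_path)
    if not any(dll.is_relative_to(d) for d in TRUSTED_DLL_DIRS):
        findings.append("DLL loaded from a non-standard directory")
    if not event.dll_signed:
        findings.append("unsigned DLL")
    return findings


def suspicious_child(parent_path: str, child_path: str) -> bool:
    """Parent-child rule: a watched utility spawning a shell is a red flag."""
    return _win(parent_path).name in NO_SHELL_PARENTS and _win(child_path).name in SHELLS


hit = ImageLoad(r"C:\Users\Public\Temp\fondue.exe", r"C:\Users\Public\Temp\propsys.dll", False)
print(sideload_findings(hit))
print(suspicious_child(r"C:\Users\Public\Temp\ScreenClippingHost.exe",
                       r"C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe"))
```

A copied binary sitting next to a dropped DLL trips all three load checks at once, which is why combining them tends to be more robust than relying on any single signal. The sketch requires Python 3.9 or later for `PurePath.is_relative_to`.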

Advanced implants often unhook system calls in NTDLL.dll to bypass endpoint detection and response (EDR) solutions that monitor API calls. What are the practical implications for organizations relying solely on automated monitoring, and how can defenders adapt their hunting strategies to identify such evasion tactics?

When an implant like Dohdoor unhooks system calls in NTDLL.dll, it is essentially performing “digital surgery” to blind the EDR. Many EDRs place user-mode “hooks” on commonly abused API functions in NTDLL to see what a program is doing; if the malware removes those hooks, the EDR loses that view, even while the malware is reflectively loading a Cobalt Strike Beacon into memory. This means that organizations relying solely on automated alerts are in a dangerous position, because the very system designed to protect them is being bypassed at the user-mode level. Defenders must adapt by hunting for integrity violations in memory, searching for code sections that have been modified after the process started. It becomes a game of monitoring the monitors: using lower-level tools to verify that the standard security hooks are still in place and functioning as intended.
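"Monitoring the monitors" can be sketched as two comparisons over snapshots of NTDLL's code section taken from the same process: one captured after the EDR installed its hooks, and one captured later. How the snapshots are read (for example, through an OS memory-reading API) is outside this sketch, and both the function offsets and the assumption that hooks begin with a relative `JMP` (opcode `0xE9`) are hypothetical; real EDR hook layouts vary.

```python
JMP_REL32 = 0xE9  # assumption: the EDR's inline hooks begin with a 5-byte relative JMP


def modified_ranges(baseline: bytes, current: bytes) -> list[tuple[int, int]]:
    """Return (offset, length) for every contiguous run of bytes that changed
    between two snapshots of the same code region."""
    if len(baseline) != len(current):
        raise ValueError("snapshots must cover the same region")
    ranges, start = [], None
    for i, (old, new) in enumerate(zip(baseline, current)):
        if old != new and start is None:
            start = i  # a modified run begins
        elif old == new and start is not None:
            ranges.append((start, i - start))  # the run ended at i - 1
            start = None
    if start is not None:
        ranges.append((start, len(baseline) - start))
    return ranges


def missing_hooks(current: bytes, hook_offsets: dict[str, int]) -> list[str]:
    """Return the functions whose expected hook stub is no longer present."""
    return sorted(name for name, off in hook_offsets.items() if current[off] != JMP_REL32)


# Toy 16-byte "code section" with two hooked function entries.
hooked = bytearray(16)
hooked[0] = hooked[8] = JMP_REL32
unhooked = bytearray(hooked)
unhooked[8:11] = b"\x4c\x8b\xd1"  # original "mov r10, rcx" prologue written back
offsets = {"NtAllocateVirtualMemory": 0, "NtProtectVirtualMemory": 8}  # hypothetical offsets
print(modified_ranges(bytes(hooked), bytes(unhooked)))  # [(8, 3)]
print(missing_hooks(bytes(unhooked), offsets))          # ['NtProtectVirtualMemory']
```

Note that unhooking restores clean bytes, so a comparison against the on-disk DLL alone would show nothing unusual; the baseline has to be the hooked state the EDR expects.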

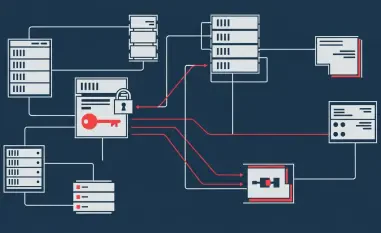

Threat actors often route traffic through global cloud infrastructure to mask their origin and blend into normal outbound traffic. When technical overlaps suggest involvement from established state-sponsored groups, how should organizations adjust their threat models to account for shifts in typical victimology or targeting patterns?

The overlap between UAT-10027 and known North Korean groups like Lazarus or Kimsuky suggests that threat actors are becoming more flexible in their targeting. While Lazarus historically focused on cryptocurrency and defense, we are now seeing the same technical “fingerprints,” like the LazarLoader patterns, appearing in attacks on healthcare and education. Organizations need to stop assuming they aren’t a target just because they don’t fit a group’s historical profile; if you have data, a network, or a connection to a larger target, you are in the crosshairs. Threat models should focus on the “how” rather than the “who,” prioritizing defenses against specific techniques, such as routing traffic through Cloudflare, instead of waiting for an attribution report. This shift in victimology shows that these actors are opportunistic and will pivot their infrastructure toward wherever they find a viable entry point.

What is your forecast for the evolution of DoH-based malware campaigns?

I expect that DoH-based campaigns will soon become the standard rather than the exception for sophisticated actors, as it is simply too effective at bypassing the current generation of network defenses. We will likely see malware that doesn’t just use one DoH provider, but rotates through dozens of them to prevent any single IP range from being blocked. Furthermore, as more legitimate applications adopt DoH by default, the “noise” of this traffic will increase, making it even easier for backdoors like Dohdoor to hide in plain sight. For readers, my best advice is to focus on endpoint visibility; since the network is becoming increasingly dark due to encryption, your ability to see what is happening inside the memory of your workstations and servers is your only remaining line of sight. Establishing a baseline of normal process behavior now is the only way you will be able to spot the anomalies of tomorrow.
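Establishing that baseline can start as simply as recording which destinations each process normally contacts and reporting anything new. The sketch below is a hypothetical illustration: the event source, the learning window, and the example hostnames are assumptions, and a real baseline would also need aging and allow-listing to stay manageable.

```python
from collections import defaultdict


class ProcessBaseline:
    """Record which destinations each process normally contacts, then report
    connections that fall outside that learned baseline."""

    def __init__(self) -> None:
        self._seen: dict[str, set[str]] = defaultdict(set)

    def learn(self, process: str, destination: str) -> None:
        """Add one observed (process, destination) pair to the baseline."""
        self._seen[process.lower()].add(destination.lower())

    def anomalies(self, events):
        """Return (process, destination) events never seen during baselining."""
        return [
            (proc, dest) for proc, dest in events
            if dest.lower() not in self._seen.get(proc.lower(), set())
        ]

    def new_destination_counts(self, events) -> dict[str, int]:
        """Count distinct unbaselined destinations per process; a high count can
        indicate rotation across many providers."""
        fresh = defaultdict(set)
        for proc, dest in self.anomalies(events):
            fresh[proc.lower()].add(dest.lower())
        return {proc: len(dests) for proc, dests in fresh.items()}


baseline = ProcessBaseline()
baseline.learn("chrome.exe", "cloudflare-dns.com")
today = [
    ("chrome.exe", "cloudflare-dns.com"),
    ("ScreenClippingHost.exe", "cloudflare-dns.com"),
    ("ScreenClippingHost.exe", "dns.google"),
    ("ScreenClippingHost.exe", "dns.quad9.net"),
]
print(baseline.new_destination_counts(today))  # {'screenclippinghost.exe': 3}
```

Counting distinct new destinations per process, rather than raising one alert per connection, is what makes provider rotation visible: a backdoor cycling through many DoH resolvers stands out even when each individual resolver is reputable.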