Modern enterprises are no longer just experimenting with chatbots but are deploying fully autonomous agents capable of managing internal databases and executing complex API calls across diverse cloud environments. This shift represents a fundamental change in the digital landscape as Large Language Models transition from isolated text generators to integrated production engines. While these advancements drive efficiency, they simultaneously introduce a sophisticated class of threats known as agentic prompt injection. HackerOne recently launched its specialized testing service to address these vulnerabilities, signaling a pivotal moment for cybersecurity. The initiative focuses on the systemic risks that arise when AI systems gain the power to interact with the physical and digital world through downstream tool execution.

The High-Stakes Evolution of Production AI and the Rise of Agentic Risks

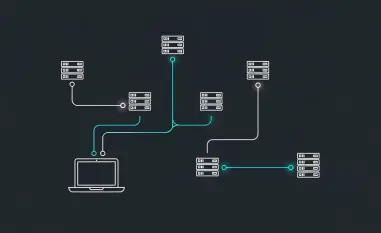

The journey from experimental prototypes to production-ready AI agents has fundamentally altered the corporate attack surface. In earlier iterations, a prompt injection might only result in a model generating inappropriate text or breaking its persona. However, current agentic systems are often connected to sensitive retrieval pipelines and external execution environments, meaning a successful exploit can lead to unauthorized data exfiltration or the manipulation of critical business processes.

Industry leaders and security researchers are now collaborating to build more secure integration points for these autonomous workflows. The complexity of these systems necessitates a move beyond simple perimeter defenses. Because agentic AI operates with a degree of operational autonomy, the significance of a breach extends far beyond the model itself. Protecting enterprise data requires a comprehensive understanding of how an injection can propagate through an entire interconnected network.

Tracking the Surge in Adversarial Exploitation and AI Security Adoption

From Static Guardrails to Multi-Turn Testing: Emerging Defense Strategies

Traditional input filtering and static guardrails are increasingly proving insufficient against the creativity of modern adversaries. Organizations are moving toward structured, multi-turn adversarial scenarios that simulate real-world interactions. This methodology allows security teams to test how a model maintains its integrity over a prolonged conversation rather than relying on a single-shot filter.

The focus has transitioned from monitoring hypothetical model behavior to conducting tangible risk validation in live environments. Continuous red teaming has emerged as the preferred strategy for keeping pace with evolving tactics. By simulating sophisticated attacks, businesses can identify the precise moment an agent fails to distinguish between a legitimate command and a malicious injection hidden within a data retrieval.

Quantifying the Threat: The Exponential Growth of Validated LLM Vulnerabilities

Market data reveals a staggering 540 percent year-over-year increase in reported prompt injection vulnerabilities, highlighting the urgency of the situation. As businesses move beyond experimental phases, the demand for specialized AI security services is projected to grow exponentially. This surge reflects a maturing market where leaders recognize that model accuracy alone is no longer a sufficient metric for success.

Performance indicators for AI resilience are shifting toward security-first benchmarks that prioritize robustness against manipulation. Organizations are now allocating significant portions of their technology budgets to ensure that their autonomous systems do not become liabilities. The ability to quantify these risks through validated vulnerability reports has become essential for maintaining investor confidence and operational stability.

Bridging the Security Gap Between Theoretical Risks and Operational Realities

Standard model-level safeguards often fail when faced with tool-aware injection attacks that exploit the logic of the underlying application. Securing retrieval-augmented generation (RAG) and external API workflows involves overcoming layers of complexity that traditional security tools were not designed to handle. A model might be perfectly safe in a vacuum but becomes a high-risk asset once it is granted the ability to read and write to a corporate database.

Strategies for identifying confirmed exposure involve analyzing how interconnected systems respond to malicious inputs across the entire chain of command. Identifying these gaps before an actor exploits them is the primary challenge for modern security teams. Effective defense requires a deep integration of security protocols at the architectural level rather than as an afterthought or an external wrapper.

Navigating the Emerging Standards for Secure and Trustworthy AI Systems

Organizations such as the Coalition for Secure AI (CoSAI) are playing a vital role in establishing industry-wide security benchmarks. These standards provide a framework for high-profile sectors like finance and healthcare to deploy AI at scale while meeting strict compliance requirements. By adhering to these emerging guidelines, enterprises can build a foundation of trust with their users and regulatory bodies.

Establishing a baseline for enterprise risk management now requires rigorous adversarial validation as a standard operating procedure. These benchmarks help distinguish between platforms that are merely functional and those that are truly resilient. As regulatory pressure increases, the adoption of these standardized security practices will likely become a prerequisite for operating in the global digital economy.

The Road Ahead for Autonomous AI Security and Continuous Exposure Validation

The future of AI as an autonomous operator suggests a permanent need for persistent security monitoring. As models gain more influence over decision-making processes, the potential for silent failures increases. Balancing the rapid deployment of innovative features with a robust defensive architecture remains a primary concern for Chief Information Security Officers.

Future adoption of AI red teaming will likely be driven by both global economic conditions and increasing regulatory scrutiny. The transition toward autonomous workflows demands a security posture that is just as dynamic as the technology it protects. Proactive exposure management will be the defining characteristic of successful AI implementations as the technology continues to mature.

Hardening the Enterprise: Why Adversarial Resilience Is Now a Business Necessity

The transition to agentic AI necessitated a move from theoretical safety to end-to-end validation across all integrated systems. Organizations that invested in long-term security found that adversarial resilience provided a competitive advantage by fostering innovation within a trustworthy framework. Proactive exposure management became the standard for securing autonomous workflows and protecting sensitive enterprise assets from increasingly clever injection tactics.

Securing the future of these systems required a fundamental shift in how businesses viewed the relationship between AI capability and defensive architecture. Successful leaders integrated continuous testing into their development lifecycles, ensuring that their agents remained robust in the face of evolving threats. This commitment to rigorous validation ensured that the move toward autonomy did not come at the expense of corporate security or data integrity.