The discovery of a critical security vulnerability within the Cursor Integrated Development Environment has sent shockwaves through the developer community, exposing how modern AI-native coding tools can be weaponized against the very users they aim to empower. This specific flaw, identified by security researchers as CursorJack, centers on the exploitation of the Model Context Protocol (MCP) deeplinks, which are custom URL schemes designed to simplify the installation of MCP servers. By manipulating these links, attackers can embed malicious configuration data that, when processed by the IDE, leads to local code execution or the installation of unauthorized remote servers. This vulnerability highlights a significant architectural blind spot where the speed of innovation in AI-assisted development has outpaced the implementation of traditional security boundaries. While the IDE provides prompts for installation, the underlying mechanism fails to verify the integrity or origin of the server configuration, leaving the gateway open for exploitation through links.

Exploiting the Human Element in Development

A central theme in the analysis of CursorJack is the systematic exploitation of the human factor within the software engineering workflow. Developers are increasingly conditioned to interact with high-frequency prompts and automated configuration updates as they integrate sophisticated AI models into their daily coding routines. This psychological habituation creates a massive surface for social engineering, as a developer might perceive a malicious MCP installation request as a routine update or a necessary extension for a new project. Because developers typically operate with elevated system permissions to manage dependencies and local environments, a successful breach through the IDE often grants an attacker direct access to sensitive assets such as proprietary source code and cloud environment API keys. The absence of a robust verification layer means that the responsibility for security is shifted onto the individual user, who may lack the specific context required to distinguish a legitimate server configuration from a deceptive one.

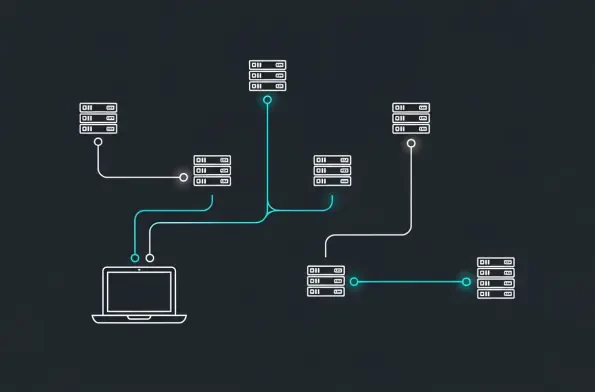

Technically, the vulnerability exploits the way the Model Context Protocol interacts with the operating system’s handling of custom URI schemes. When a user clicks a specially crafted link, the IDE is triggered to initiate an installation sequence that bypasses traditional web-based security filters. This process allows for the execution of arbitrary commands if the configuration points to a local executable or a remote server controlled by a malicious entity. The core of the issue resides in the lack of a trust-but-verify model, where the IDE implicitly trusts the payload of the deeplink once the user provides a single point of interaction. Researchers noted that the prompt shown to the user often lacks the technical granularity needed to identify hidden command-line arguments or suspicious remote endpoints. This lack of visibility ensures that even a cautious developer might inadvertently authorize a persistent backdoor, as the interface prioritizes a frictionless user experience over the rigorous validation protocols that are standard in more mature software.

Redefining Security for the AI Tooling Era

Addressing the risks posed by CursorJack required a fundamental shift in how AI-integrated development environments manage external configurations and protocol extensions. Security experts argued that the MCP ecosystem necessitated architectural improvements that moved beyond simple user consent prompts. Implementing stricter permission controls for command execution was a primary recommendation, alongside the introduction of a centralized registry for verified MCP sources. Such a system allowed the IDE to cross-reference installation requests against a database of trusted providers, effectively neutralizing the threat of unverified third-party links. Furthermore, enhancing the visibility of installation parameters within the user interface allowed developers to see exactly what commands were being executed and which remote servers were being contacted. By embedding these defensive measures directly into the framework, the industry ensured that the adoption of productivity-enhancing AI tools did not come at the expense of local machine security or corporate property.

The emergence of the CursorJack vulnerability served as a critical turning point for the development of secure AI tooling throughout the latter half of 2026. Organizations that proactively audited their development environments and restricted the use of unverified URI schemes successfully mitigated the most immediate risks. Security teams implemented more rigorous monitoring of IDE outbound connections and established internal protocols that required the manual vetting of all MCP servers before they were deployed across engineering departments. This shift toward a zero-trust model for development tools emphasized that even the most innovative software required traditional security rigor to remain viable in a professional setting. Ultimately, the lessons learned from this incident prompted a broader industry movement to standardize security protocols for all AI-native IDEs, ensuring that future advancements in coding assistance remained resilient against sophisticated social engineering and automated exploits by modern attackers.