The Rapid Escalation of AI Infrastructure Threats

The exploitation of CVE-2024-33017 marks a turning point for the security of AI development frameworks. Langflow, an open-source tool with over 145,000 GitHub stars, recently became the target of a fast-moving exploitation campaign that exposes the fragility of the AI supply chain. The vulnerability allows unauthenticated remote code execution, letting attackers run arbitrary commands on a host system with minimal effort. The timeline of this exploit shows how far the window between patch release and active exploitation has shrunk, and how sophisticated threat actors use public advisories to target mission-critical AI infrastructure before organizations have the chance to secure their environments.

Chronological Progression of the Langflow Exploitation

Mid-2024: Discovery of the Unauthenticated RCE Flaw

Security researchers identified a critical defect in the Langflow POST endpoint used for creating public flows. The core of the issue was the application’s failure to sandbox the Python code contained in node definitions. By injecting a malicious “data” parameter, an attacker could bypass the standard database storage logic and have the server process attacker-supplied node code. Because the vulnerability required no prior authentication, any actor capable of sending a single, well-crafted HTTP request could compromise a vulnerable Langflow instance.
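The class of flaw described above can be illustrated with a minimal sketch. This is not Langflow's code: `STORED_FLOWS`, `resolve_flow`, and the request shape are hypothetical names chosen for illustration. The sketch assumes the safe behavior is that an unauthenticated caller may only trigger a flow already stored on the server and may never supply its own flow definition through a “data” field.

```python
from typing import Any, Mapping

# Hypothetical in-memory stand-in for the database of saved flows.
STORED_FLOWS: dict[str, dict[str, Any]] = {
    "public-demo": {"nodes": [{"id": "n1", "code": "print('hello')"}]},
}


def resolve_flow(flow_id: str, body: Mapping[str, Any], authenticated: bool) -> dict[str, Any]:
    """Return the flow definition that a build request is allowed to run."""
    stored = STORED_FLOWS.get(flow_id)
    if stored is None:
        raise KeyError(f"unknown flow: {flow_id}")

    if not authenticated:
        # Anonymous callers only ever get the server-side definition.
        # Rejecting (rather than silently ignoring) the override makes
        # probing attempts visible in error logs.
        if "data" in body:
            raise PermissionError("unauthenticated callers may not supply flow data")
        return stored

    # Authenticated callers may submit an edited definition; it still
    # contains code and would need sandboxing before execution.
    return body.get("data", stored)
```

The design choice is that the trust decision happens before any node code is looked at, so an unauthenticated request can never choose what gets executed.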

Late 2024: Patch Release and the Start of the Exploitation Clock

The developers of Langflow released version 1.1.1 to address the vulnerability and published technical details of the fix. The race between defenders and attackers began almost immediately. Although no public proof of concept existed on platforms like GitHub, the technical advisory gave sophisticated threat actors enough context to reverse-engineer the flaw. Within 20 hours of the patch becoming available, security monitoring tools recorded the first active exploitation attempts in the wild.
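For defenders, the first practical question is whether a given deployment predates the fix. The following is a minimal sketch of that check, assuming plain dotted version strings and taking 1.1.1 (the release named above) as the patched version. The helper names `parse_version` and `is_vulnerable` are illustrative, and pre-release suffixes such as `rc1` are ignored rather than ordered correctly.

```python
import re

PATCHED = (1, 1, 1)  # fixed release named in this article


def parse_version(text: str) -> tuple[int, ...]:
    """Extract the leading numeric components, e.g. 'v1.0.19' -> (1, 0, 19)."""
    match = re.match(r"\s*v?(\d+(?:\.\d+)*)", text)
    if not match:
        raise ValueError(f"unrecognized version: {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def _pad(parts: tuple[int, ...], width: int) -> tuple[int, ...]:
    """Right-pad with zeros so that (1, 1) compares like (1, 1, 0)."""
    return parts + (0,) * (width - len(parts))


def is_vulnerable(version: str, patched: tuple[int, ...] = PATCHED) -> bool:
    """True when the version sorts strictly below the patched release."""
    parts = parse_version(version)
    width = max(len(parts), len(patched))
    return _pad(parts, width) < _pad(patched, width)
```

In an inventory script, the version string would typically come from package metadata or a deployment manifest; how it is collected is outside the scope of this sketch.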

Phase 1: Mass Automated Scanning and Discovery

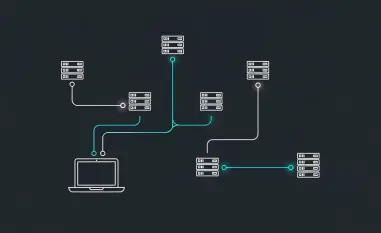

The initial stage of the attack involved widespread, automated reconnaissance. Threat actors used a global network of IP addresses to scan the internet for accessible Langflow instances. The goal of this phase was to build a comprehensive list of targets that had not yet upgraded to the patched version. The speed and breadth of these scans illustrate how quickly modern botnets can identify specific software versions across the global IPv4 space.

Phase 2: Targeted Reconnaissance and Infrastructure Staging

Once vulnerable targets were identified, the attackers transitioned to a more focused approach. Using pre-staged command-and-control infrastructure, they probed the identified instances to confirm exploitability. This phase let the attackers verify that their payloads would execute correctly in each victim's specific environment. Overlapping fingerprints across this infrastructure suggested that a single coordinated group or a shared toolkit was behind the global campaign.

Phase 3: Data Exfiltration and Credential Harvesting

The final and most damaging phase of the campaign used the RCE payload to exfiltrate sensitive information. Attackers specifically targeted environment variables, API keys, and database credentials stored within the Langflow environment. With these credentials in hand, the threat actors moved beyond simple system compromise to gain unauthorized access to connected cloud services and internal databases. This stage transformed the incident from a localized software vulnerability into a significant supply chain threat.
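Because environment variables were a primary target, it helps to know which secrets a Langflow process could expose and would therefore need rotating after a suspected compromise. The sketch below lists variable names that look like credentials. The name pattern is an assumption chosen for illustration, not an exhaustive rule, and only names are returned so that secret values are never printed or logged.

```python
import os
import re
from typing import Mapping, Optional

# Illustrative heuristic: substrings that commonly appear in credential names.
SECRET_NAME = re.compile(
    r"KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL|DATABASE_URL",
    re.IGNORECASE,
)


def secret_like_names(environ: Optional[Mapping[str, str]] = None) -> list[str]:
    """Return the sorted names of variables that look like secrets."""
    env = os.environ if environ is None else environ
    return sorted(name for name in env if SECRET_NAME.search(name))


if __name__ == "__main__":
    for name in secret_like_names():
        print(name)  # names only; values are deliberately not shown
```

Every name this reports is a candidate for rotation and for being moved out of the process environment into a dedicated secrets store.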

Analyzing the Impact and Emerging Patterns in AI Security

The most striking takeaway from this timeline is how quickly a patch release turned into an active threat. The 20-hour window demonstrates that the “n-day” exploit cycle now moves faster than many IT departments can manage with traditional patching schedules. This event also highlights the “Advisory-as-a-Blueprint” trend, in which detailed security notes intended for defenders are used by attackers to build functional exploits. The pattern of exploitation, which moved from automated scans to surgical credential theft, suggests that AI tools are now primary targets for corporate espionage and for lateral movement within enterprise networks.

Regional Variations and the Future of AI Sandbox Integrity

While the attacks were global in nature, the impact varied based on how organizations deployed their AI agents. Installations exposed directly to the internet without a reverse proxy or VPN were the first to fall. A common misconception in the AI community is that internal tools do not require the same level of hardening as customer-facing applications; this incident showed that the interconnected nature of AI frameworks makes them high-value targets regardless of their internal status. Experts suggest that AI framework security must move toward “secure by design” principles, with mandatory sandboxing for all user-supplied code to prevent similar RCE vulnerabilities. In response, teams are prioritizing runtime security monitoring to detect anomalous code execution in real time, and organizations are adopting more aggressive patching cadences for AI infrastructure to stay ahead of the rapid exploit development cycle observed in this case.
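One way to approximate runtime monitoring of code execution inside a Python service is the interpreter's audit hook mechanism (`sys.addaudithook`, available since Python 3.8). The sketch below is an assumption about how such monitoring might be wired up, not a description of any vendor's tooling; `ExecutionMonitor` and the chosen event set are illustrative. Audit hooks are a detection aid only: they are not a sandbox and do not stop the code from running.

```python
import sys
from typing import Any, List, Tuple

# Audit events that indicate dynamic code or process execution.
WATCHED = {"exec", "compile", "os.system", "subprocess.Popen"}


class ExecutionMonitor:
    """Record watched audit events while the monitor is active."""

    def __init__(self) -> None:
        self.events: List[str] = []
        self.enabled = False
        # Audit hooks cannot be removed once added, so the hook stays
        # installed and is switched on and off with a flag instead.
        sys.addaudithook(self._hook)

    def _hook(self, event: str, args: Tuple[Any, ...]) -> None:
        if self.enabled and event in WATCHED:
            self.events.append(event)

    def __enter__(self) -> "ExecutionMonitor":
        self.enabled = True
        return self

    def __exit__(self, *exc_info: Any) -> None:
        self.enabled = False


if __name__ == "__main__":
    monitor = ExecutionMonitor()
    with monitor:
        # Stand-in for a request handler that ends up running node code.
        exec(compile("x = 1", "<node>", "exec"))
    print(monitor.events)
```

In a real deployment the hook would forward events to a logging or alerting pipeline, and a baseline would be needed, since ordinary imports also compile and execute code.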